Chris Richard

DevOps, SRE, Music & More

What I'm thinking about...

Zero-Downtime Deploys on a Budget

Jan 27, 2026

It finally happened to me: the tech/games industry layoff epidemic of the 2020s reached out and smacked me right in the face. Earlier this month I suddenly found myself without a job, and with the vast majority of my work from the last 8 years locked behind a private GitHub organization I no longer had access to. I quickly realized I needed to develop a portfolio to showcase my DevOps bona fides.

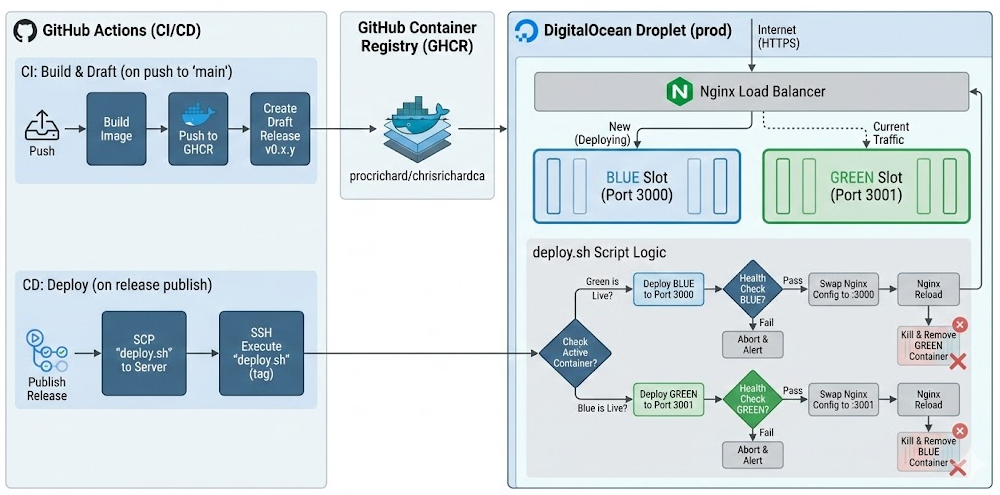

Well, you’re looking at it. This site, while simple on the surface, hides a robust CI/CD pipeline tuned for projects at a much greater scale. I’ll break down in detail the automation, the human-gated release process, and the script I use to ensure zero-downtime deployments when I push a change to production.

The Pipeline in Action

Let’s start with the high-level view of how code gets from my machine to your screen:

I’ve split the process into three distinct phases, each with a specific responsibility. For our purposes we’ll call them Gatekeeper, Builder, and Deployer.

1. Gatekeeper (Pull Requests)

Before anything hits the main branch, it has to get past the bouncer. I use a GitHub Action that triggers on every Pull Request. Its primary job right now is to enforce Conventional Commits.

If I try to merge a PR with a title like “fixed the thing”, the pipeline fails. It demands semantic clarity, like “fix: resolve navigation bug in header”. This isn’t just me being pedantic; it’s crucial for the next step. It also runs my test suite, ensuring I don’t break existing functionality (or it will eventually… once I have more interesting stuff to test).
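To make the title rule concrete, here is a minimal sketch of a Conventional Commits check in bash. It is an illustration, not the actual workflow: the function name `is_conventional_title` is hypothetical, and the list of allowed types is an assumption borrowed from common linter defaults (the Conventional Commits spec itself only gives meaning to feat and fix).

```bash
#!/usr/bin/env bash

# Hypothetical helper: succeeds (exit 0) when a PR title looks like a
# Conventional Commit, e.g. "fix: resolve navigation bug in header".
is_conventional_title() {
  local title="$1"
  # type, optional "(scope)", optional "!" for breaking changes,
  # then ": " followed by a non-empty description.
  # The type list below is an assumption, not taken from the original pipeline.
  local pattern='^(feat|fix|docs|style|refactor|perf|test|build|ci|chore|revert)(\([a-z0-9._-]+\))?!?: .+'
  [[ "$title" =~ $pattern ]]
}
```

In a GitHub Actions job, the title would typically be passed in through an environment variable populated from `github.event.pull_request.title`, and the step fails when the function returns non-zero.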

2. Builder (Merge to Main)

Once code lands in main, the build workflow kicks off.

- Semantic Versioning: It analyzes the commit history since the last release. If it sees a feat:, it bumps the minor version (e.g., 0.1.0 -> 0.2.0). If it sees a fix:, it bumps the patch. No more manual version guessing.

- Dockerization: It builds a production-ready Docker image, tags the image with my new semver, and pushes it to the GitHub Container Registry.

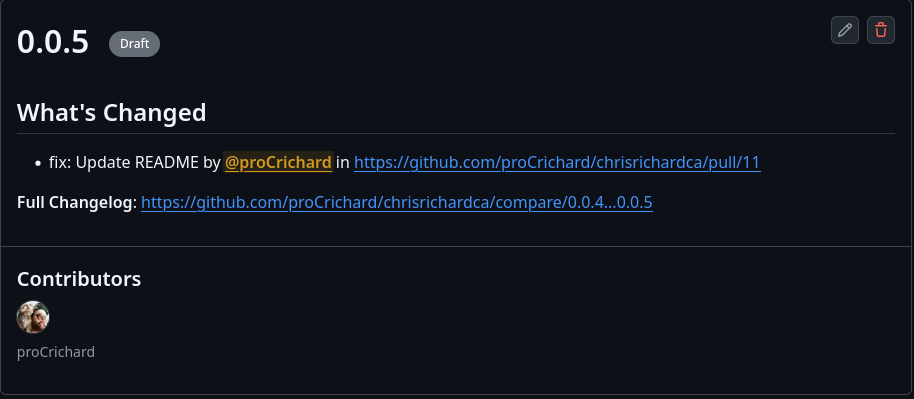

- The Draft: Instead of deploying immediately, it creates a Draft Release on GitHub. It compiles the changelog automatically and attaches the new version number. It sits there, waiting for me to publish. If I push more commits to main before publishing, the draft release is updated with a revised changelog and a newer semver if necessary.
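The version bump in the Builder step can be sketched in a few lines of bash. This is a rough illustration under stated assumptions, not the tool the pipeline actually uses: `next_version` is a hypothetical function that reads commit subjects on stdin, and the major bump on a "!" marker follows the Conventional Commits spec rather than anything described above.

```bash
#!/usr/bin/env bash

# Hypothetical helper: print the next version, given the current version as
# an argument and one commit subject per line on stdin.
next_version() {
  local current="$1" major minor patch bump="none" subject
  local scope='(\([^)]*\))?'              # optional "(scope)"
  local breaking_re="^[a-z]+${scope}!: "   # assumption: "!" means a breaking change
  local feat_re="^feat${scope}: "
  local fix_re="^fix${scope}: "

  IFS=. read -r major minor patch <<< "$current"

  while IFS= read -r subject || [[ -n "$subject" ]]; do
    if [[ "$subject" =~ $breaking_re ]]; then
      bump="major"
      break                                # nothing can outrank a major bump
    elif [[ "$subject" =~ $feat_re ]]; then
      bump="minor"
    elif [[ "$subject" =~ $fix_re && "$bump" == "none" ]]; then
      bump="patch"
    fi
  done

  case "$bump" in
    major) echo "$((major + 1)).0.0" ;;
    minor) echo "${major}.$((minor + 1)).0" ;;
    patch) echo "${major}.${minor}.$((patch + 1))" ;;
    *)     echo "$current" ;;              # no releasable commits: keep the version
  esac
}
```

Something like `git log --format=%s "v0.1.0"..HEAD | next_version 0.1.0` would feed it the subjects since the last tag; the tag name here is only an example.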

3. Deployer (Production)

This is the only manual step in the entire chain, and it’s just a button click. When I’m ready to ship, I open that Draft Release and click Publish.

This triggers the final workflow, which connects to my DigitalOcean droplet via SSH, pulls the new container from GHCR, and runs my custom deploy.sh script. It doesn’t just restart the server; it executes a Blue/Green deployment.

First, it figures out which “color” (container) is currently live by checking the running Docker processes, and prepares to launch the other one.

    # Detect who is running (Blue or Green?)
    if docker ps | grep -q "${APP_NAME}-blue"; then
        CURRENT_COLOR="blue"
        NEW_COLOR="green"
        NEW_PORT="3001"
    else
        CURRENT_COLOR="green"
        NEW_COLOR="blue"
        NEW_PORT="3000"
    fi

Next, it boots the new container. But we don’t send users there yet. The script pauses to perform a health check on the internal port. If the app crashed on startup or I pushed some otherwise bad code, the script aborts right here. The live site (the old color) keeps serving traffic undisturbed.
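A freshly started container may need a moment before it accepts connections, so a single probe fired too early can fail for the wrong reason. One way to handle the pause is a bounded retry loop. The sketch below is an assumption about how that could look, not the script's actual code: `wait_for_healthy` is a hypothetical helper that reruns any command until it succeeds or the attempts run out.

```bash
#!/usr/bin/env bash

# Hypothetical helper: wait_for_healthy <attempts> <delay_seconds> <command...>
# Runs the command up to <attempts> times, sleeping between failures.
# Returns 0 on the first success, 1 if every attempt fails.
wait_for_healthy() {
  local attempts="$1" delay="$2"
  shift 2
  local i
  for ((i = 1; i <= attempts; i++)); do
    if "$@"; then
      return 0
    fi
    # No point sleeping after the final failed attempt.
    (( i < attempts )) && sleep "$delay"
  done
  return 1
}
```

It could wrap the curl probe from the deploy script, for example `wait_for_healthy 10 2 curl --silent --fail "http://localhost:${NEW_PORT}"`, where the attempt count and delay are arbitrary example values.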

    # We try to fetch the homepage. If it fails (connection error or an
    # HTTP status of 400 or higher), we abort.
    if curl --silent --fail "http://localhost:${NEW_PORT}" >/dev/null; then
        echo "✅ Health check passed!"
    else
        echo "❌ Health check FAILED. Aborting deployment."
        # ... cleanup logic ...
        exit 1
    fi

Finally, if the health check passes, we perform the “hot swap”. I use sed to update the upstream port in the Nginx configuration file and then reload Nginx.

    # This regex finds 'proxy_pass http://localhost:XXXX;' and replaces the port number.
    # "|" is the sed delimiter here, so the slashes in the URL need no escaping.
    sed -i "s|proxy_pass http://localhost:[0-9]*;|proxy_pass http://localhost:${NEW_PORT};|" "$NGINX_CONFIG"

    # Reload Nginx (Zero Downtime)
    nginx -s reload

This entire process ensures that a broken deploy never sees the light of day, and a successful deploy happens instantly without dropping a single request… of the many tens of thousands this page is sure to receive. The cost of this pipeline: $0.
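The swap itself can be made a little more defensive. Here is a minimal sketch, assuming a hypothetical `swap_upstream_port` function that is not part of the original deploy.sh: it validates the port, rewrites the proxy_pass line, and leaves a backup of the previous config next to the edited file.

```bash
#!/usr/bin/env bash

# Hypothetical helper: swap_upstream_port <nginx_config_path> <new_port>
# Points 'proxy_pass http://localhost:XXXX;' at the new port and keeps the
# previous file contents at "<nginx_config_path>.bak".
swap_upstream_port() {
  local config="$1" new_port="$2"

  # Refuse anything that is not a plain port number.
  [[ "$new_port" =~ ^[0-9]+$ ]] || { echo "invalid port: $new_port" >&2; return 1; }
  [[ -f "$config" ]] || { echo "config not found: $config" >&2; return 1; }

  # "|" as the sed delimiter avoids escaping the slashes in the URL.
  # -i.bak edits in place and writes the backup copy.
  sed -i.bak "s|proxy_pass http://localhost:[0-9]*;|proxy_pass http://localhost:${new_port};|" "$config"
}
```

A caller could then run `nginx -t` to validate the edited file and reload only if it passes, copying the .bak file back into place otherwise.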

What’s the point?

Is this complete overkill for a personal site with minimal traffic? 100%. Do I sleep better knowing my markdown files are guarded by a blue/green deployment strategy worthy of a bank? Also yes.

Truthfully, this project is about proving a point: reliable, automated infrastructure doesn’t have to be expensive or complicated—it just has to be intentional. If you need someone who brings this level of care to your engineering team (and who is currently available for hire!), check out my resume above. Let’s chat.